Quick Start

Docker Compose Deployment (Recommended)

Prerequisites

- Linux host (Ubuntu 22.04+ / Debian 12+)

- Docker Engine 28+, Docker Compose v2

- At least one egress IP (proxy server)
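The Docker requirement above can be checked before deploying. This is a minimal sketch, assuming `docker --version` prints its usual `Docker version X.Y.Z, build ...` line; the helper names `docker_major` and `docker_new_enough` are illustrative and not part of this project.

```shell
#!/usr/bin/env bash
# Sketch: verify the Docker Engine 28+ prerequisite from a version string.

# Extract the major version, e.g. "Docker version 28.1.1, build 4eba377" -> 28.
# Prints nothing when the string does not look like `docker --version` output.
docker_major() {
  printf '%s\n' "$1" | sed -n 's/^Docker version \([0-9][0-9]*\)\..*/\1/p'
}

# Succeed only when the reported major version is 28 or newer.
docker_new_enough() {
  local major
  major=$(docker_major "$1")
  [ -n "$major" ] && [ "$major" -ge 28 ]
}

# Usage on a real host (requires Docker to be installed):
#   docker_new_enough "$(docker --version)" || echo "Docker Engine 28+ required" >&2
#   docker compose version >/dev/null 2>&1 || echo "Docker Compose v2 required" >&2
```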

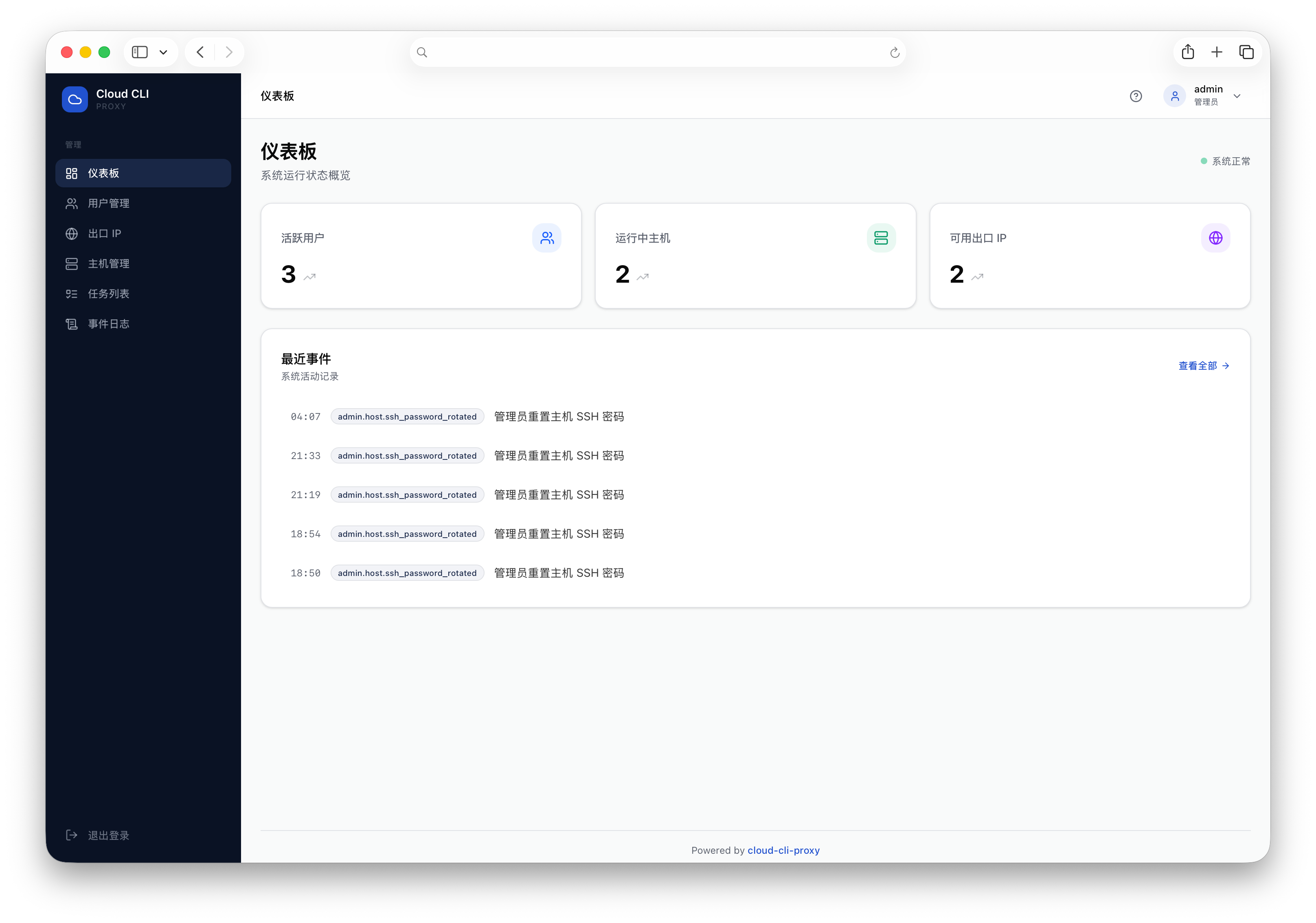

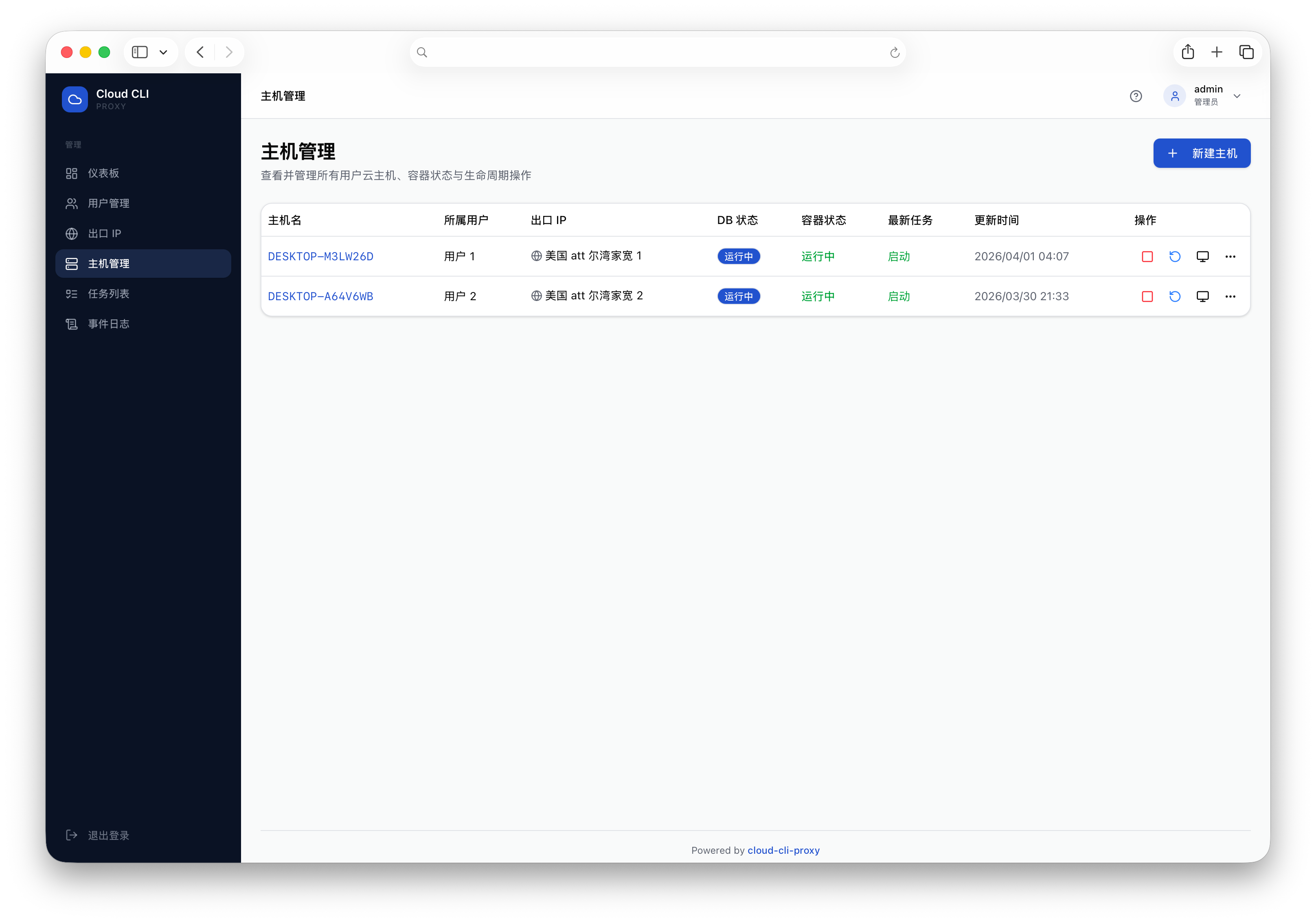

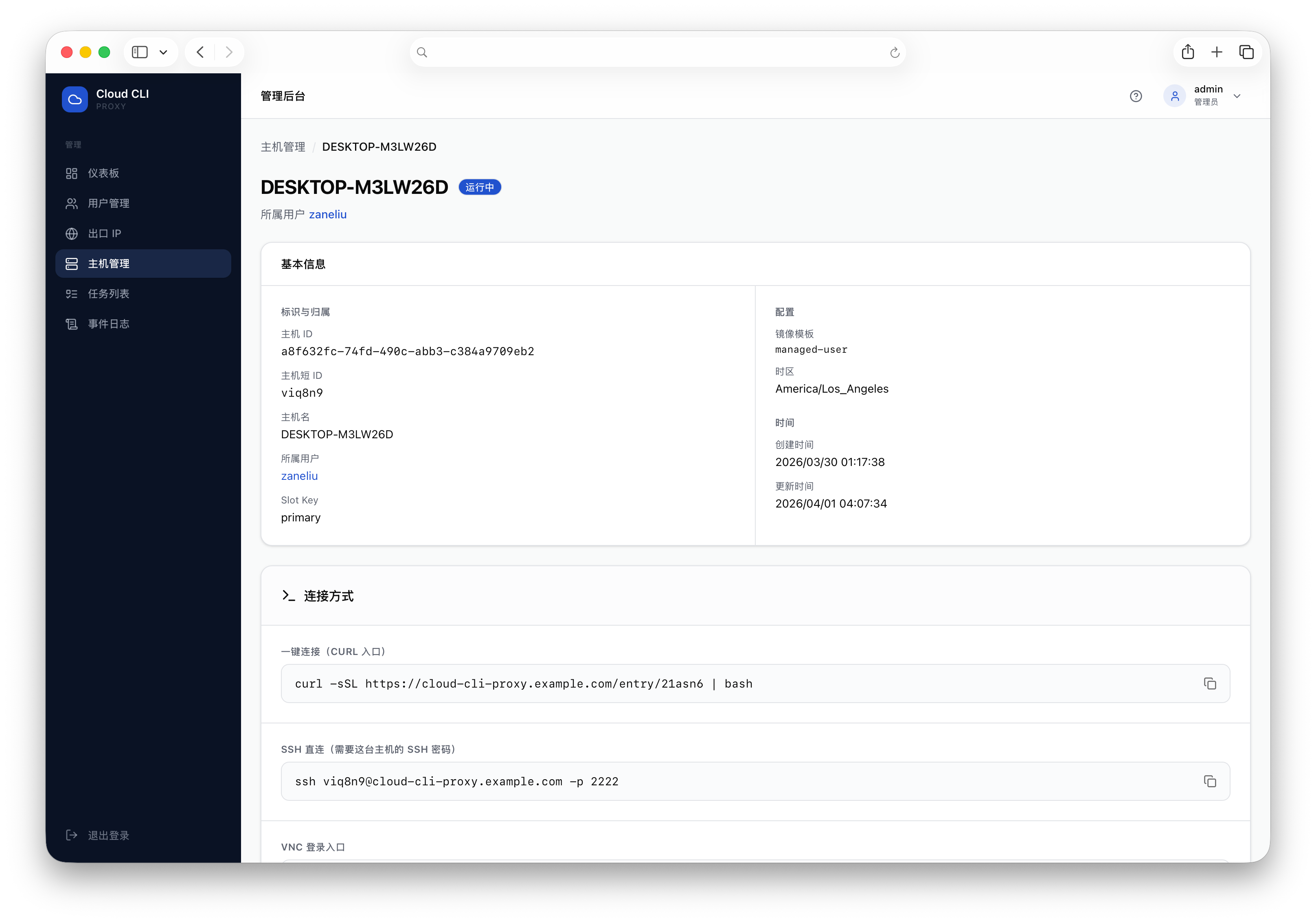

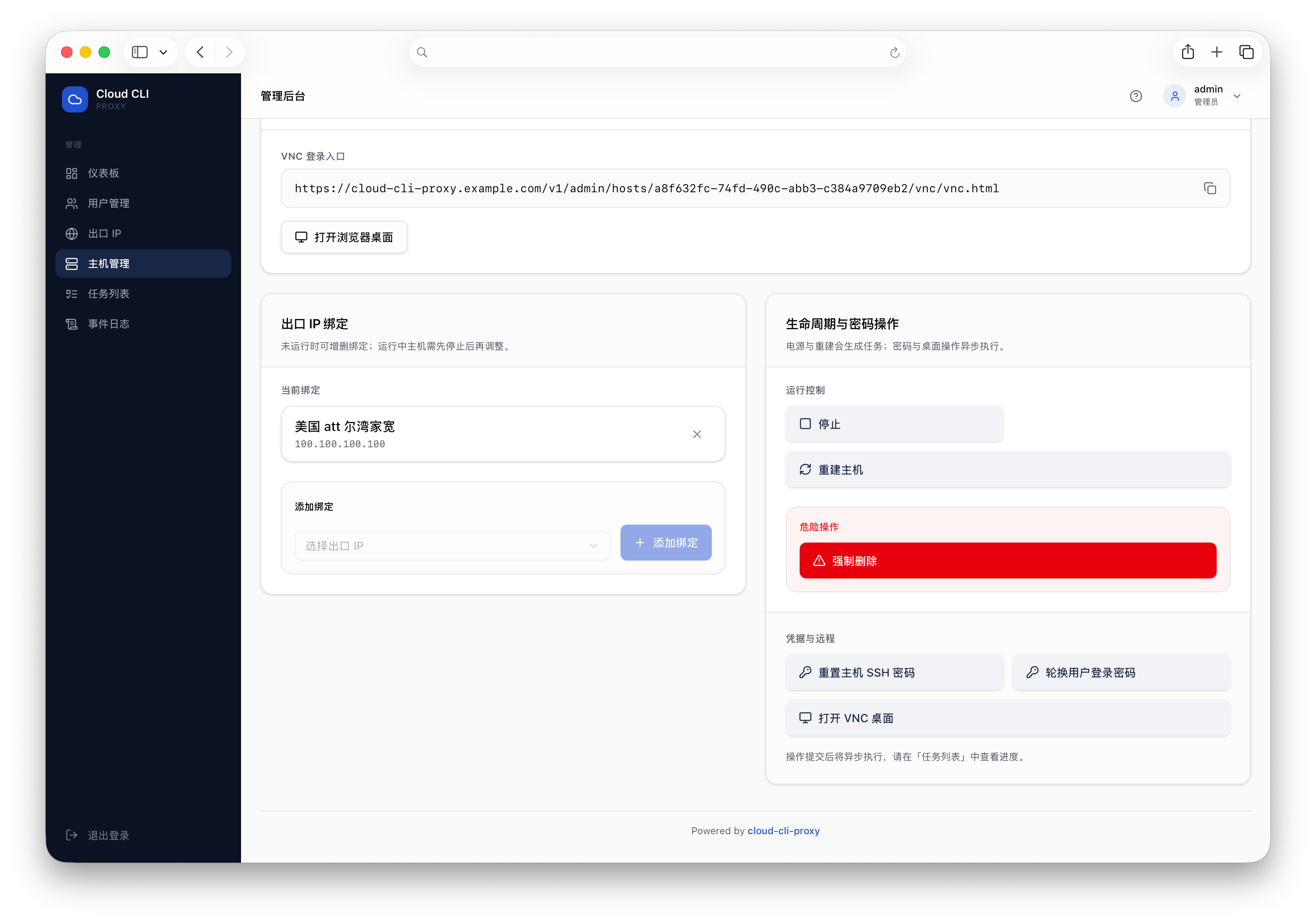

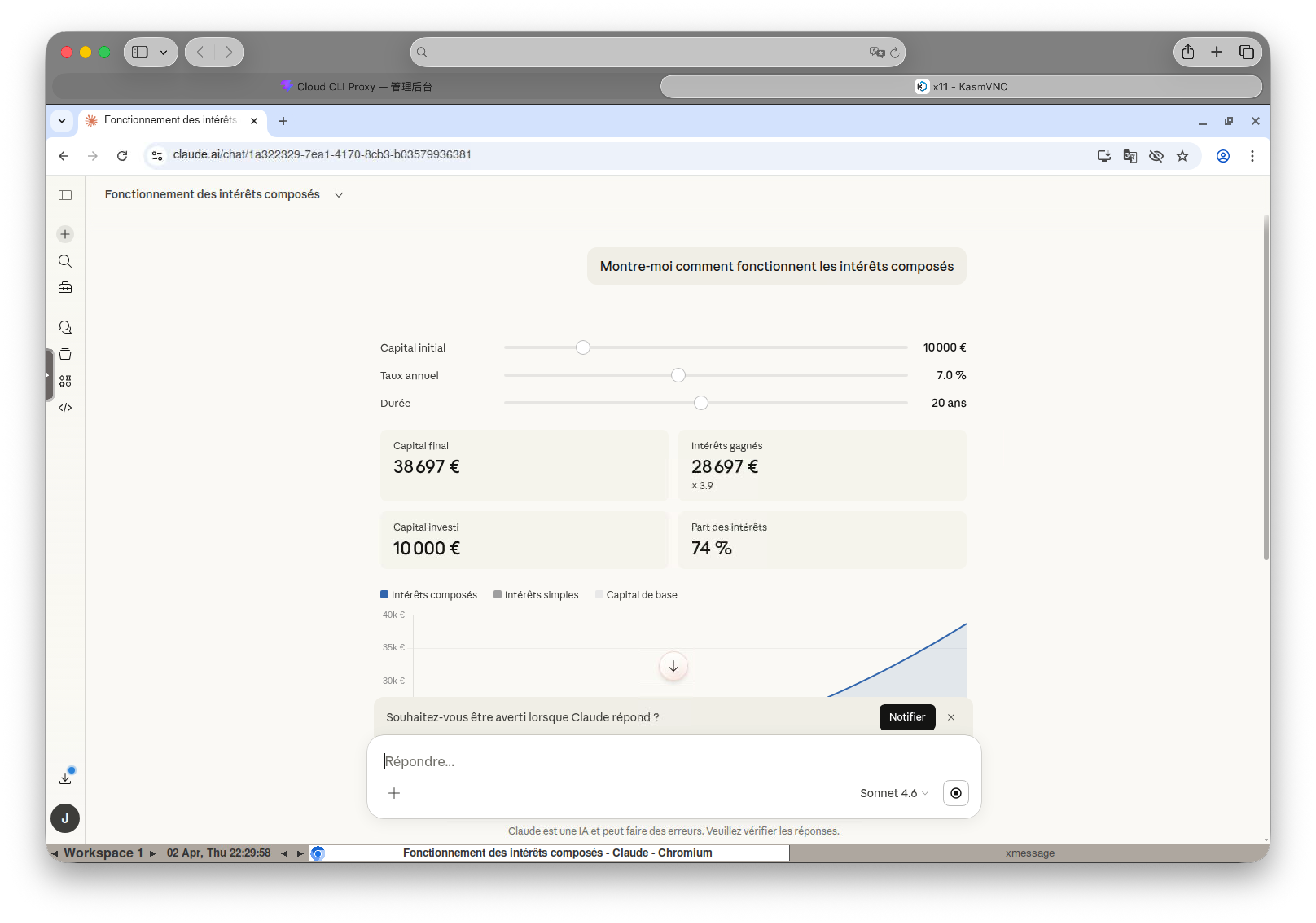

UI Preview

The screenshots below are from repository

imgs/, so you can see the admin and user experience before deploying.

Dashboard Overview

Host Management List

Host Detail and Access Entry

Lifecycle and Network Operations

Browser Remote Desktop (KasmVNC)

Step 1: Clone

git clone https://github.com/ZaneL1u/cloud-cli-proxy.git

cd cloud-cli-proxy

Step 2: Generate Environment Config

Run the setup script to auto-generate all passwords and secrets:

bash deploy/scripts/setup-env.sh

Choose a database mode:

- Built-in Docker PostgreSQL (recommended): auto-generates DB password, managed by Docker Compose, zero config.

- External PostgreSQL: interactively enter your DB host, port, credentials, with SSL support.

Both options auto-generate an admin password (20 chars) and JWT secret (48 chars).

Important

The script displays the admin password once. Save it immediately!

Step 3: Start Services

Prebuilt images (latest) are the recommended default: they deploy faster and match what CI builds.

# Built-in Docker PostgreSQL

docker compose pull

docker compose up -d

# External PostgreSQL (skip built-in DB)

docker compose pull control-plane admin

docker compose up -d control-plane admin

Optional local source build:

docker compose -f docker-compose.yml -f docker-compose.build.yaml --profile build-only build --no-cache

docker compose -f docker-compose.yml -f docker-compose.build.yaml up -d --force-recreate

Step 4: Verify

curl http://127.0.0.1:8080/healthz

# {"status":"ok","checks":{"database":"ok","agent":"ok"}}

Service endpoints:

- API: http://YOUR_HOST:8080

- Admin dashboard: http://YOUR_HOST:3000

- SSH proxy: YOUR_HOST:2222
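Containers can take a moment to become healthy after `docker compose up -d`, so the health check from Step 4 can be wrapped in a small wait loop. This is a sketch, assuming an unhealthy stack either fails the request or reports a `status` other than `ok`; the function names and the 2-second polling interval are illustrative choices.

```shell
#!/usr/bin/env bash
# Sketch: poll /healthz until the control plane reports "status":"ok".

# Succeed when a /healthz response body reports an overall status of "ok".
# Whitespace is stripped first so pretty-printed JSON also matches.
healthz_ok() {
  local body
  body=$(printf '%s' "$1" | tr -d '[:space:]')
  case $body in
    *'"status":"ok"'*) return 0 ;;
    *) return 1 ;;
  esac
}

# Poll the given URL every 2 seconds, up to $2 attempts (default 30).
wait_healthy() {
  local url tries i
  url=$1
  tries=${2:-30}
  i=0
  while [ "$i" -lt "$tries" ]; do
    if healthz_ok "$(curl -fsS "$url" 2>/dev/null)"; then
      return 0
    fi
    i=$((i + 1))
    sleep 2
  done
  return 1
}

# Usage:
#   wait_healthy http://127.0.0.1:8080/healthz || echo "control plane not healthy" >&2
```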

Provisioning Users

Five steps: login → add egress IP → create user → create host & bind → send connection command.

1. Get Admin Token

Log in via the admin dashboard, or use the API:

TOKEN=$(curl -s -X POST http://YOUR_HOST:8080/v1/auth/login \
  -H "Content-Type: application/json" \
  -d '{"username":"admin","password":"your-admin-password"}' | grep -o '"token":"[^"]*"' | cut -d'"' -f4)

2. Add Egress IP

Egress IPs are implemented as a sing-box TUN full tunnel. Set tunnel_type to proxy and describe the upstream in proxy_config, which takes a sing-box outbound definition.

Six protocols are supported: SOCKS5, VMess, VLESS, Shadowsocks, Trojan, HTTP.
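The `type` value inside proxy_config is a sing-box outbound type name, which is not always the protocol's display name: the requests in this section use `socks` for SOCKS5 and `shadowsocks` for Shadowsocks. The sketch below maps the six protocol names to a type string. The remaining four values (`vmess`, `vless`, `trojan`, `http`) are sing-box's own outbound type names and are assumed, not confirmed by this guide, to be accepted by the API unchanged. The function name `singbox_type` is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: map a protocol name to the sing-box outbound "type" string.

singbox_type() {
  local name
  # Compare case-insensitively so "SOCKS5" and "socks5" behave the same.
  name=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
  case $name in
    socks5 | socks) echo socks ;;
    vmess | vless | shadowsocks | trojan | http) echo "$name" ;;
    *)
      echo "unsupported protocol: $1" >&2
      return 1
      ;;
  esac
}

# Usage:
#   singbox_type SOCKS5      # prints: socks
#   singbox_type Trojan      # prints: trojan
```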

# Shadowsocks example

curl -s -X POST http://YOUR_HOST:8080/v1/admin/egress-ips \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{
    "label": "jp-ss-01",
    "ip_address": "198.51.100.5",
    "tunnel_type": "proxy",
    "provider": "manual",
    "proxy_config": {
      "type": "shadowsocks",
      "server": "198.51.100.5",
      "server_port": 8388,
      "method": "aes-256-gcm",
      "password": "your-ss-password"
    }
  }'

# SOCKS5 example

curl -s -X POST http://YOUR_HOST:8080/v1/admin/egress-ips \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{
    "label": "us-socks-01",
    "ip_address": "192.0.2.50",
    "tunnel_type": "proxy",
    "provider": "manual",
    "proxy_config": {
      "type": "socks",
      "server": "192.0.2.50",
      "server_port": 1080,
      "username": "user",
      "password": "pass"
    }
  }'

Test egress IP connectivity:

curl -s -X POST http://YOUR_HOST:8080/v1/admin/egress-ips/{ipID}/test \
  -H "Authorization: Bearer $TOKEN"

The test checks connectivity, verifies that the exit IP matches, and runs DNS leak detection.

3. Create User

curl -s -X POST http://YOUR_HOST:8080/v1/admin/users \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{
    "username": "zhangsan",
    "password": "initial-password-for-user",
    "expires_at": "2026-12-31T23:59:59Z"
  }'

4. Create Host & Bind Egress IP

Create host:

curl -s -X POST http://YOUR_HOST:8080/v1/admin/hosts \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{"user_id": "user-uuid"}'

Bind egress IP:

curl -s -X POST http://YOUR_HOST:8080/v1/admin/bindings \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d '{"host_id": "host-uuid", "egress_ip_id": "egress-ip-uuid"}'

TIP

A host requires at least one bound egress IP to start.
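Steps 1 to 4 each need a value from an earlier response: the token, then the IDs that stand in for user-uuid, host-uuid and egress-ip-uuid. The sketch below generalizes the grep/cut extraction used for the token in step 1. The helper name `json_string_field` is illustrative. That the create endpoints return the new ID in a field named `id` is an assumption; only the `token` field is confirmed by this guide, so check a real response first. The helper returns the first match and does not handle escaped quotes or nested objects; a JSON parser such as jq is more robust where available.

```shell
#!/usr/bin/env bash
# Sketch: pull one string field out of a JSON response without extra tools.

# json_string_field FIELD JSON
# Prints the value of the first "FIELD":"value" pair found in JSON.
json_string_field() {
  printf '%s' "$2" |
    grep -o "\"$1\"[[:space:]]*:[[:space:]]*\"[^\"]*\"" |
    head -n 1 |
    cut -d'"' -f4
}

# Usage (the "id" field name is an assumption, see above):
#   RESP=$(curl -s -X POST http://YOUR_HOST:8080/v1/admin/users \
#     -H "Authorization: Bearer $TOKEN" \
#     -H "Content-Type: application/json" \
#     -d '{"username":"zhangsan","password":"initial-password-for-user"}')
#   USER_ID=$(json_string_field id "$RESP")
```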

5. Send to User

After the host is created, an egress IP is bound, and the task shows the container is ready, copy access info from the host detail page in the admin UI.

Option A: One-liner SSH (classic)

Send the user this command (replace YOUR_HOST and SHORT_ID with the public gateway and the host short ID):

curl -sSf http://YOUR_HOST/entry/SHORT_ID | bash

Or the bootstrap flow (asks for username):

curl -sSf http://YOUR_HOST:8080/v1/bootstrap/script | bash

Option B: cloud-claude (recommended)

In addition to the curl command, share these three values (as shown in the admin UI):

| Field | Meaning |

|---|---|

| Gateway URL | Public HTTPS base URL of the control plane, e.g. https://gw.example.com (same origin as the admin UI in the browser; usually not the :3000 dev frontend port) |

| Short ID | Host short ID from the host detail page. If the user configures a user short ID instead, they connect to that user’s primary host |

| Password | The user’s password from the admin dashboard |

After installing cloud-claude and running init once with those values, the user runs cloud-claude from their project directory; the cwd is sshfs-mounted at the same path in the container. By default git runs on the laptop (tune with proxy_commands).

User Access

Share this section directly with your users.

Option 1: cloud-claude Local CLI (Recommended)

Install

Homebrew (macOS / Linux, recommended):

brew tap ZaneL1u/tap

brew install cloud-claude

One-liner (any platform):

curl -fsSL https://raw.githubusercontent.com/ZaneL1u/cloud-cli-proxy/main/scripts/install.sh | bash

Alternatively, download the matching archive from Releases, or build from source with go build ./cmd/cloud-claude.

First-time setup (once)

cloud-claude init

The command prompts for the Gateway URL, Short ID (host or user), and Password, then writes them to ~/.cloud-claude/config.yaml.

Flags or environment variables:

cloud-claude init --gateway https://gw.example.com --short-id abc123 --password your-password

export CLOUD_CLAUDE_GATEWAY=https://gw.example.com

export CLOUD_CLAUDE_SHORT_ID=abc123

export CLOUD_CLAUDE_PASSWORD=your-password

cloud-claude init

Connect and run Claude Code

cd ~/your/project # directory to mount into the container

alias claude=cloud-claude # optional

cloud-claude

cloud-claude -p "refactor this function"

Session management: by default, cloud-claude attaches to the existing tmux session for the same account, so a disconnect does not lose the workspace:

cloud-claude # default: attach existing session (multi-client)

cloud-claude --new-session # force a new isolated session

cloud-claude --take-over # take over the primary session and detach others

cloud-claude sessions # list current tmux sessions

cloud-claude sessions --attach 0 # attach a specific session

Mount modes: auto mode picks the best strategy; you can also specify one manually:

cloud-claude --mount-mode=auto # default: HotSync preferred, falls back to SSHFS

cloud-claude --mount-mode=full # HotSync + SSHFS dual-track (full features)

cloud-claude --mount-mode=sshfs-only # SSHFS only (compatibility first)

Self-checks and troubleshooting:

cloud-claude doctor # full five-domain check (network / auth / ssh / mount / disk)

cloud-claude doctor mount --fix # mount-only check with auto-repair

cloud-claude explain MOUNT_SSHFS_DISCONNECTED # query error code details and remediation

cloud-claude env check # verify remote timezone, locale, egress IP, FUSE, etc.

Environment variables:

- CLOUD_CLAUDE_NO_PROMOTION=1: disable cold-file read promotion (enabled by default on Linux; skipped on macOS)

- Set proxy_commands in ~/.cloud-claude/config.yaml (list of command names to run on the local host machine). Default is git only; use an empty list to disable.

- hot_sync_max_file_mb: per-file throttling threshold (default 50 MB); files larger than this fall back to the cold path.

When you run cloud-claude, it: (1) authenticates; (2) waits for the container; (3) sshfs-mounts the cwd at the same path in the container; (4) starts Claude Code remotely. Terminal size, signals, and exit codes are forwarded. Brief network interruptions recover automatically within 30s, and buffered input survives the reconnection.

Error codes:

| Exit Code | Meaning | Action |

|---|---|---|

| 1 | Auth failed | Check Short ID and password |

| 2 | Network error | Check gateway URL is reachable |

| 3 | Timeout | Container startup timeout, contact admin |

| 4 | Config error | Run cloud-claude init to reconfigure |
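In scripts, the table above can be turned into a lookup that prints the suggested action for a given exit code. This is a sketch: the function name `explain_exit_code` is illustrative, and treating 0 as success is the usual shell convention rather than something this guide states. Because remote exit codes are forwarded, a non-zero code may also come from Claude Code itself.

```shell
#!/usr/bin/env bash
# Sketch: translate a cloud-claude exit code into the action from the table.

explain_exit_code() {
  case $1 in
    0) echo "OK" ;;
    1) echo "Auth failed: check Short ID and password" ;;
    2) echo "Network error: check that the gateway URL is reachable" ;;
    3) echo "Timeout: container startup timed out, contact your admin" ;;
    4) echo "Config error: run cloud-claude init to reconfigure" ;;
    *) echo "Unknown exit code: $1" ;;
  esac
}

# Usage:
#   cloud-claude -p "refactor this function"
#   explain_exit_code "$?"
```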

Option 2: curl + SSH Access

Run the command your admin provided:

curl -sSf http://YOUR_HOST/entry/abc123 | bash

Enter your password and you'll be in your cloud host within seconds.

Pre-installed Tools

| Tool | Description |

|---|---|

| Claude Code | AI coding assistant — just run claude in terminal |

| KasmVNC + Chromium | Browser remote desktop, accessible via the admin dashboard |

| Git | Version control |

| tmux | Terminal multiplexer, sessions survive disconnects |

| zsh | Enhanced shell experience |

| Node.js | JavaScript runtime |

Using Claude Code (via SSH)

Once inside your cloud host, just run:

claude

All Claude API requests are automatically routed through the admin-designated exit IP. No proxy configuration is needed.

Reconnecting

If your SSH connection drops, re-run the same curl command to reconnect. Your container keeps running.

Rebuilding

If you need to reset your environment, click "Rebuild" in the admin dashboard. This recreates the container but preserves your home directory data.

Local Source Development (From Clone)

If you want to contribute or customize behavior, use this local development flow.

1. Install Dependencies

- Git

- Go 1.25.7+

- Node.js 20+ (recommended with corepack enabled)

- pnpm 10+

- Docker Engine + Docker Compose v2

- GNU Make
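The minimum versions above can be checked with a small comparison helper. This is a sketch that relies on `sort -V` (version sort, available in GNU coreutils); the function name `version_ge` is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: compare dotted version strings against a required minimum.

# version_ge ACTUAL MINIMUM
# Succeeds when ACTUAL is greater than or equal to MINIMUM.
version_ge() {
  # After a version sort, the smaller version comes first; ACTUAL passes
  # when that smallest entry is the MINIMUM itself.
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Usage on a development machine (each tool must be installed):
#   version_ge "$(go env GOVERSION | sed 's/^go//')" 1.25.7 || echo "Go 1.25.7+ required" >&2
#   version_ge "$(node --version | sed 's/^v//')" 20 || echo "Node.js 20+ required" >&2
#   version_ge "$(pnpm --version)" 10 || echo "pnpm 10+ required" >&2
```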

2. Clone Repository

git clone https://github.com/ZaneL1u/cloud-cli-proxy.git

cd cloud-cli-proxy

3. Initialize Dependencies and Environment

make setup

This installs frontend dependencies and auto-creates .env from .env.example when missing.

4. Start Local Database

make db

The default local PostgreSQL endpoint is 127.0.0.1:5433.

5. Start Dev Mode

make dev

After startup:

- Admin frontend: http://localhost:2568

- Control Plane API: http://127.0.0.1:8090

6. Verify and Run Tests

curl http://127.0.0.1:8090/healthz

make test

Common Commands

make dev-api # backend only

make dev-web # frontend only

make db-stop # stop local database

make db-reset # recreate local database

make help # list all commands

Next Steps

- Deployment Guide — systemd native deployment

- Configuration — Environment variables and networking

- API Reference — Full Admin API docs

- FAQ & Recovery — Troubleshooting and disaster recovery